Modeling your data with dbt


dbt enables analytics engineers to transform data in their warehouses simply by writing select statements. Snowplow has written, and maintains, a number of dbt packages that model your Snowplow data for various purposes and produce derived tables for use in analytics, AI, ML, BI, or reverse ETL tools.

Using Snowplow's dbt packages means you can draw insight and value from your data more quickly, easily, and cheaply than if you built your own modeling from scratch.

To set up dbt, Snowplow open source users can start with the dbt User Guide and then watch the introductory videos we have prepared on working with the Snowplow dbt packages.

For Snowplow BDP customers, dbt projects can be configured and scheduled in the console, meaning you can start running dbt models alongside your Snowplow pipelines.

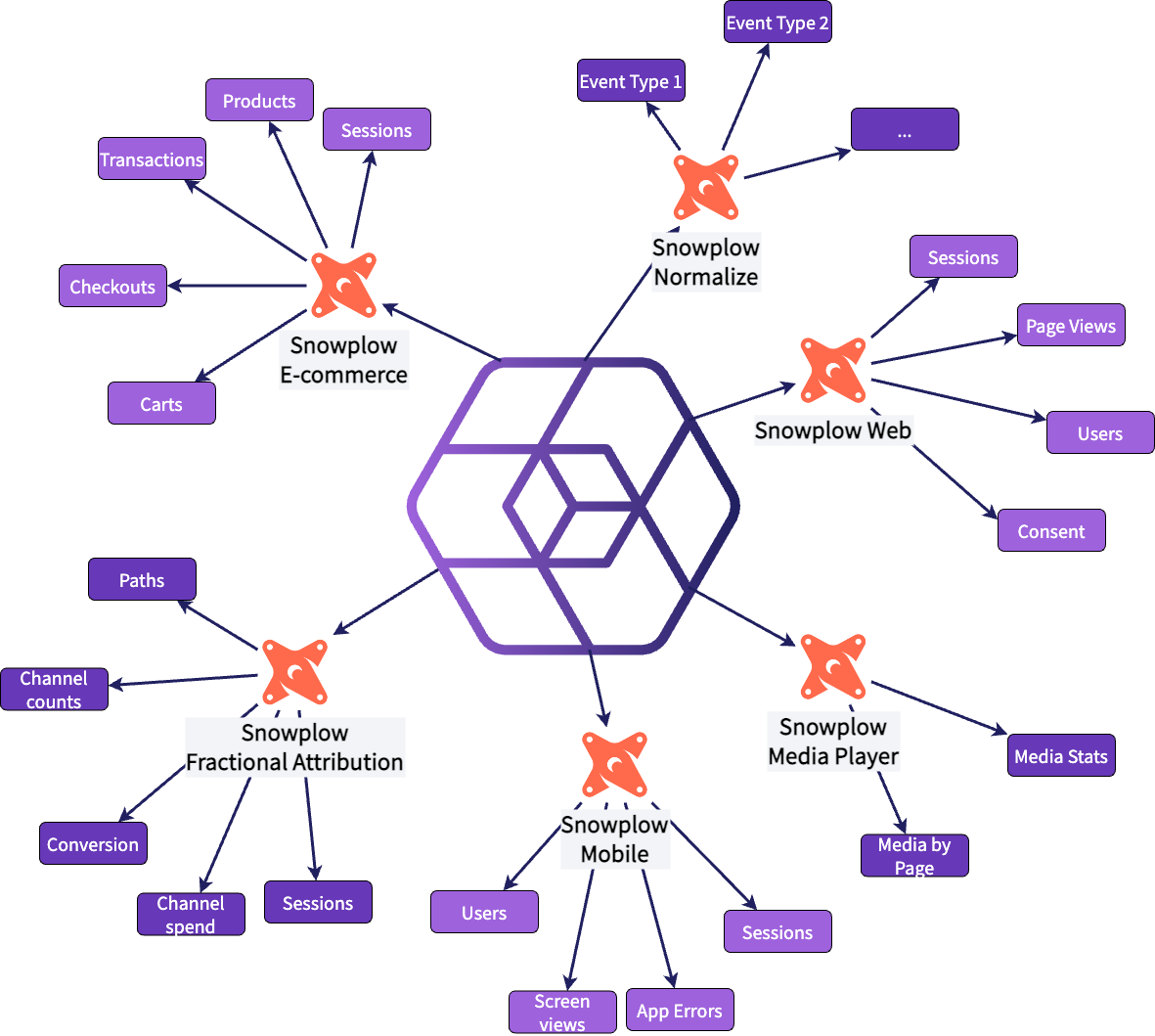

Snowplow dbt Packages

Our dbt packages come with powerful built-in features: an optimized version of the incremental materialization that saves you warehouse compute costs compared to the standard method, and custom incremental logic that ensures each run processes only the data it needs and keeps your models in sync. You can also use both of these features to build your own custom models.

There are five core Snowplow dbt packages:

- Snowplow Web (dbt model docs): for modeling your web data for page views, sessions, users, and consent

- Snowplow Mobile (dbt model docs): for modeling your mobile app data for screen views, sessions, users, and crashes

- Snowplow Media Player (dbt model docs): for modeling your (web-based) media elements for play statistics

- Snowplow E-commerce (dbt model docs): for modeling your (web-based) E-commerce interactions across carts, products, checkouts, and transactions

- Snowplow Fractribution (dbt model docs): for attribution modeling with Snowplow

The Snowplow Media Player package is designed to be used with the Snowplow Web package and not as a standalone package.

Each package comes with a set of standard models that take your Snowplow tracker data and either produce tables aggregated to different levels or perform analysis for you. You can also add your own models on top; see the page on custom modules for more information on how to do this.

In addition to these packages, there is a Normalize package that makes it easy to build models that transform your event data into a different structure, one that may be better suited to downstream consumers.

The data warehouses supported by each package version are listed below:


| snowplow-web version | dbt versions | BigQuery | Databricks | Redshift | Snowflake | Postgres |
|---|---|---|---|---|---|---|
| 0.16.0 | >=1.5.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ✅^ |
| 0.15.2 | >=1.4.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ✅^ |
| 0.13.3* | >=1.3.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ✅ |
| 0.11.0 | >=1.0.0 to <1.3.0 | ✅ | ✅ | ✅ | ✅ | ✅ |
| 0.5.1 | >=0.20.0 to <1.0.0 | ✅ | ❌ | ✅ | ✅ | ✅ |
| 0.4.1 | >=0.18.0 to <0.20.0 | ✅ | ❌ | ✅ | ✅ | ❌ |

^ Since version 0.15.0 of snowplow_web, Postgres 15.0 or later is required; otherwise you will need to override the default_channel_group macro so that it does not use the regexp_like function.

\* From version 0.13.0 onwards, the package uses the load_tstamp field, so you must be using RDB Loader v4.0.0 or later, or BigQuery Loader v1.0.0 or later. If you do not have this field, either because you are on an earlier loader version or because you are using the Postgres Loader, you will need to set snowplow__enable_load_tstamp to false in your dbt_project.yml, and you will not be able to use the consent models.

| snowplow-mobile version | dbt versions | BigQuery | Databricks | Redshift | Snowflake | Postgres |
|---|---|---|---|---|---|---|
| 0.7.2 | >=1.3.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ✅ |
| 0.6.3 | >=1.3.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ✅ |
| 0.5.5 | >=1.0.0 to <1.3.0 | ✅ | ✅ | ✅ | ✅ | ✅ |
| 0.2.0 | >=0.20.0 to <1.0.0 | ✅ | ❌ | ✅ | ✅ | ✅ |

| snowplow-media-player version | snowplow-web version | dbt versions | BigQuery | Databricks | Redshift | Snowflake | Postgres |
|---|---|---|---|---|---|---|---|
| 0.5.3 | >=0.14.0 to <0.16.0 | >=1.4.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ✅ |
| 0.4.2 | >=0.13.0 to <0.14.0 | >=1.3.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ✅ |
| 0.4.1 | >=0.12.0 to <0.13.0 | >=1.3.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ✅ |
| 0.3.4 | >=0.9.0 to <0.12.0 | >=1.0.0 to <1.3.0 | ✅ | ✅ | ✅ | ✅ | ✅ |
| 0.1.0 | >=0.6.0 to <0.7.0 | >=0.20.0 to <1.1.0 | ❌ | ❌ | ✅ | ❌ | ✅ |

| snowplow-normalize version | dbt versions | BigQuery | Databricks | Redshift | Snowflake | Postgres |
|---|---|---|---|---|---|---|
| 0.3.2 | >=1.4.0 to <2.0.0 | ✅ | ✅ | ❌ | ✅ | ❌ |
| 0.2.3 | >=1.3.0 to <2.0.0 | ✅ | ✅ | ❌ | ✅ | ❌ |
| 0.1.0 | >=1.0.0 to <2.0.0 | ✅ | ✅ | ❌ | ✅ | ❌ |

| snowplow-ecommerce version | dbt versions | BigQuery | Databricks | Redshift | Snowflake | Postgres |
|---|---|---|---|---|---|---|
| 0.5.3 | >=1.4.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ⚠️ |
| 0.3.0 | >=1.3.0 to <2.0.0 | ✅ | ✅ | ❌ | ✅ | ❌ |
| 0.2.1 | >=1.0.0 to <2.0.0 | ✅ | ✅ | ❌ | ✅ | ❌ |

⚠️ Postgres is technically supported by the models in this package; however, one of the contexts' names is too long to be loaded via the Postgres Loader.

| snowplow-fractribution version | dbt versions | BigQuery | Databricks | Redshift | Snowflake | Postgres |
|---|---|---|---|---|---|---|
| 0.3.5 | >=1.4.0 to <2.0.0 | ✅ | ✅ | ✅ | ✅ | ❌ |
| 0.3.0 | >=1.4.0 to <2.0.0 | ✅ | ✅ | ❌ | ✅ | ❌ |
| 0.2.0 | >=1.3.0 to <2.0.0 | ✅ | ✅ | ❌ | ✅ | ❌ |
| 0.1.0 | >=1.0.0 to <2.0.0 | ❌ | ❌ | ❌ | ✅ | ❌ |

dbt Version Compatibility Checker

You may be using other dbt packages that require a specific version of dbt or of the dbt_utils package, or you may simply not wish to upgrade your existing setup.

Here’s a way to check the latest version of our packages you can install for a given setup. Simply enter your dbt version and (optionally) dbt_utils version below.
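The lookup behind a checker like this can be sketched in Python. This is a minimal, hypothetical sketch, not the checker's actual implementation: the names parse_version, latest_compatible, and latest_for_all are illustrative. The rows are transcribed from the compatibility tables on this page, so only the versions listed there can be returned. dbt_utils constraints are not modeled because the tables do not list them, and the media player's snowplow-web requirement is not checked.

```python
"""Hypothetical sketch of a dbt compatibility lookup built from this page's tables."""
from typing import Dict, FrozenSet, List, Optional, Tuple

# Warehouse sets, named after the table columns.
ALL = frozenset({"bigquery", "databricks", "redshift", "snowflake", "postgres"})
NO_REDSHIFT_OR_POSTGRES = frozenset({"bigquery", "databricks", "snowflake"})

# Each row: (package version, lowest dbt version (inclusive),
#            highest dbt version (exclusive), supported warehouses).
# Rows are ordered newest first, as in the tables.
Row = Tuple[str, str, str, FrozenSet[str]]
COMPATIBILITY: Dict[str, List[Row]] = {
    "snowplow-web": [
        ("0.16.0", "1.5.0", "2.0.0", ALL),  # Postgres 15+ required (^ note)
        ("0.15.2", "1.4.0", "2.0.0", ALL),  # Postgres 15+ required (^ note)
        ("0.13.3", "1.3.0", "2.0.0", ALL),
        ("0.11.0", "1.0.0", "1.3.0", ALL),
        ("0.5.1", "0.20.0", "1.0.0", ALL - {"databricks"}),
        ("0.4.1", "0.18.0", "0.20.0", frozenset({"bigquery", "redshift", "snowflake"})),
    ],
    "snowplow-mobile": [
        ("0.7.2", "1.3.0", "2.0.0", ALL),
        ("0.6.3", "1.3.0", "2.0.0", ALL),
        ("0.5.5", "1.0.0", "1.3.0", ALL),
        ("0.2.0", "0.20.0", "1.0.0", ALL - {"databricks"}),
    ],
    # The required snowplow-web version for the media player is NOT checked here.
    "snowplow-media-player": [
        ("0.5.3", "1.4.0", "2.0.0", ALL),
        ("0.4.2", "1.3.0", "2.0.0", ALL),
        ("0.4.1", "1.3.0", "2.0.0", ALL),
        ("0.3.4", "1.0.0", "1.3.0", ALL),
        ("0.1.0", "0.20.0", "1.1.0", frozenset({"redshift", "postgres"})),
    ],
    "snowplow-normalize": [
        ("0.3.2", "1.4.0", "2.0.0", NO_REDSHIFT_OR_POSTGRES),
        ("0.2.3", "1.3.0", "2.0.0", NO_REDSHIFT_OR_POSTGRES),
        ("0.1.0", "1.0.0", "2.0.0", NO_REDSHIFT_OR_POSTGRES),
    ],
    "snowplow-ecommerce": [
        # Postgres is marked ⚠️ in the table; this sketch treats it as unsupported.
        ("0.5.3", "1.4.0", "2.0.0", ALL - {"postgres"}),
        ("0.3.0", "1.3.0", "2.0.0", NO_REDSHIFT_OR_POSTGRES),
        ("0.2.1", "1.0.0", "2.0.0", NO_REDSHIFT_OR_POSTGRES),
    ],
    "snowplow-fractribution": [
        ("0.3.5", "1.4.0", "2.0.0", ALL - {"postgres"}),
        ("0.3.0", "1.4.0", "2.0.0", NO_REDSHIFT_OR_POSTGRES),
        ("0.2.0", "1.3.0", "2.0.0", NO_REDSHIFT_OR_POSTGRES),
        ("0.1.0", "1.0.0", "2.0.0", frozenset({"snowflake"})),
    ],
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn "1.4" or "1.4.5" into a tuple padded to three parts, e.g. (1, 4, 0)."""
    parts = [int(part) for part in text.strip().split(".")]
    parts += [0] * (3 - len(parts))
    return tuple(parts)


def latest_compatible(package: str, dbt_version: str,
                      warehouse: Optional[str] = None) -> Optional[str]:
    """Return the newest listed package version for this dbt version, or None."""
    dbt = parse_version(dbt_version)
    for version, lowest, highest, warehouses in COMPATIBILITY[package]:
        in_range = parse_version(lowest) <= dbt < parse_version(highest)
        if in_range and (warehouse is None or warehouse.lower() in warehouses):
            return version  # rows are newest first, so the first match is the latest
    return None


def latest_for_all(dbt_version: str,
                   warehouse: Optional[str] = None) -> Dict[str, Optional[str]]:
    """Run the lookup for every package listed above."""
    return {pkg: latest_compatible(pkg, dbt_version, warehouse) for pkg in COMPATIBILITY}
```

For example, `print(latest_compatible("snowplow-web", "1.4.5"))` prints `0.15.2`, and `print(latest_compatible("snowplow-fractribution", "1.3.2", "redshift"))` prints `None`, because no listed Fractribution version supports Redshift on dbt 1.3. Versions are compared as integer tuples rather than strings, so that, for instance, 0.20.0 correctly sorts after 0.18.0. Pre-release suffixes such as 1.5.0b1 are not handled.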
